It’s been one of those weeks. One of those years, actually – David Bowie *and* Leonard Cohen. Listening to ‘Democracy’ as I write this.

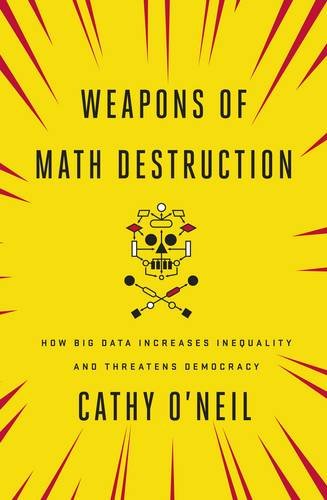

Still, I have managed to read Cathy O’Neil’s excellent Weapons of Math Destruction, about the devastation algorithms in the hands of the powerful are wreaking on the social fabric. “Big data processes codify the past. They do not invent the future. Doing that requires moral imagination, and that’s something only humans can provide. Sometimes that will mean putting fairness ahead of profit.”

The book’s chapters explore different contexts in which algorithms are crunching big data, sucked out of all of our recorded behaviours, to take the human judgement out of decision-taking, whether that’s employing people, insuring them or giving them a loan, sentencing them in court (America, friends), ranking universities and colleges, ranking and firing teachers… In fact, the scope of algorithmic power is increasing rapidly. The problems boil down to two very fundamental points.

One is that often the data on a particular behaviour or characteristic is not observed, or unobservable – dedication to work, say, or trustworthiness. So proxies have to be used. Past health records? Postcode? But this encodes unfairness against individuals, such as those who are reliable even though they live on a bad estate, and does so automatically with no transparency and no redress.

The other is that there is a self-reinforcing dynamic in the use of algorithms. Take the example of the US News ranking of US colleges. Students will aim to get into those with a high ranking, so colleges have to do more of whatever it takes to get a high ranking, and that will bring them more students, and more chance of improving their ranking. Too bad that the ranking depends on specific numbers: SAT scores of incoming freshmen, graduation rates and so on. These seemed perfectly sensible, but when the rankings they feed into are the only thing that potential students look at, institutions cheat and game to improve these metrics. This is the adverse effect of target setting on addictive crystal meth. Destructive feedback loops are inevitable, O’Neil points out, whenever numerical proxies are used for the criteria of interest, and the algorithm is a black box with no humans intervening in the feedback loops.

The book is particularly strong on the way apparently objective scoring systems are embedding social and economic disadvantage. When the police look at big data to decide which areas to police more harshly, the evidence of past arrests takes them to poor areas. A vicious feedback loop – they are there more, they arrest more people for minor misdemeanours, the data confirms the area as more crime-ridden. “We criminalize poverty, believing all the while that our tools are not only scientific but fair.” Credit scoring algorithms, those evaluating teachers using inadequate underlying models, ad sales targeting the vulnerable – the world of big data and algos is devastating the lives of people on low incomes. Life has always been unfair. It is now unfair at lightning speed and wearing a cloak of spurious scientific accuracy.
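To make the policing loop concrete, here is a toy sketch of my own, not anything taken from the book. It assumes two districts with exactly the same underlying rate of minor offences, a made-up allocation rule (the district with more recorded arrests gets 7 patrols per round, the other gets 3) and one recorded arrest per patrol. The function names and all the numbers are hypothetical.

```python
def simulate_patrols(initial_arrests, rounds, hot_patrols=7, other_patrols=3):
    """Track recorded arrests in two districts with the SAME underlying crime rate.

    Assumption (hypothetical rule, not from the book): each round the district
    with more recorded arrests gets `hot_patrols`, the other gets
    `other_patrols`, and every patrol records exactly one minor arrest.
    """
    recorded = list(initial_arrests)
    history = [tuple(recorded)]
    for _ in range(rounds):
        # The "data" decides where the police go next.
        hot = 0 if recorded[0] >= recorded[1] else 1
        for district in (0, 1):
            patrols = hot_patrols if district == hot else other_patrols
            recorded[district] += patrols  # one recorded arrest per patrol
        history.append(tuple(recorded))
    return history


def share_of_first(totals):
    """Fraction of all recorded arrests that fall in the first district."""
    return totals[0] / sum(totals)


history = simulate_patrols(initial_arrests=(11, 10), rounds=20)
print(history[0], round(share_of_first(history[0]), 2))
print(history[-1], round(share_of_first(history[-1]), 2))
```

Starting from a near-even split of 11 arrests to 10, the first district ends up with 151 recorded arrests to the second's 70 after 20 rounds, so its share of the record rises from about 0.52 to about 0.68, even though by construction the two districts behave identically. The record reflects where the patrols were sent, not any difference in behaviour.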

O’Neil argues that legal restraints are needed on the use of algorithmic decision-making by both government agencies and the private sector. The market will not be able to end this arms race, nor will it even want to, as it is profitable.

This is a question of justice, she argues. The book is vague on specifics, calling for transparency as to what goes into the black boxes and a regulatory system. I don’t know how that might work. I do know that until we get effective regulation, those using big data – including especially the titans like Facebook and Google – have a special responsibility to consider the consequences.